Attack your agents before

adversaries do

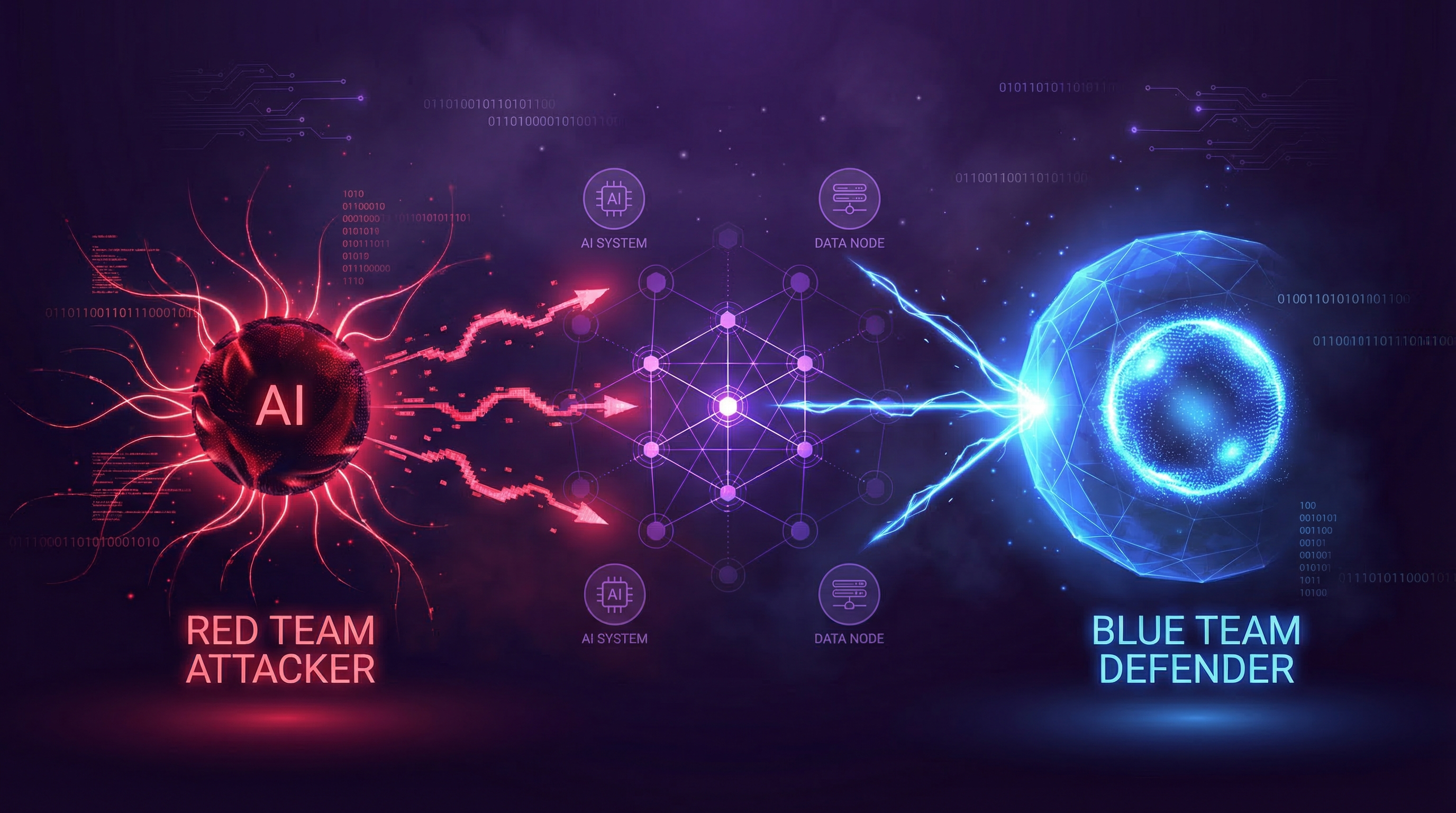

Aona's Red Team Agent probes every vulnerability in your AI agents — then the Blue Team Agent automatically patches them. A closed loop from discovery to remediation.

The Two Agents

Attack and defend, automatically

Red finds. Blue fixes. Then Red checks again. The loop runs continuously so your agents stay secure as they evolve.

Red Team Agent

Offensive — finds vulnerabilities

Runs hundreds of adversarial attack patterns against your deployed agents. Methodical, relentless, and always up to date with the latest agent attack techniques.

Blue Team Agent

Defensive — patches vulnerabilities

Analyses every finding from the Red Team and generates targeted fixes — guardrail patches, system prompt hardening, access control updates. Human approval before anything ships.

Guardrail Patching

Automatically generates and applies targeted guardrail rules for each discovered vulnerability.

System Prompt Hardening

Rewrites and strengthens system prompts to resist the specific attack patterns found by the Red Team.

Human-in-the-Loop Approval

Review every proposed fix before it ships. Full audit trail of what changed, why, and when.

Continuous Re-Verification

After every fix, the Red Team re-tests to confirm the patch held. Protection keeps pace as your agents evolve.

How It Works

From deployment to defence in minutes

Connect your agents, run the Red Team, review the Blue Team's patches. No manual pentesting, no waiting weeks for a security report.

Connect your agents

Point Aona at your deployed AI agents — any framework, any provider. OpenAI, Anthropic, custom MCP servers, LangChain, CrewAI.

Red Team runs attack battery

The Red Team Agent automatically probes your agents with hundreds of attack patterns — jailbreaks, injections, data leakage attempts, privilege escalation.

Vulnerability report generated

Every finding is documented: attack vector, severity, reproduction steps, and risk impact. No noise — only actionable vulnerabilities.

Blue Team patches automatically

The Blue Team Agent generates targeted guardrail patches and system prompt hardening for each finding. You review and approve.

Red Team verifies the fix

After each patch is applied, the Red Team re-runs to confirm the vulnerability is closed. The loop is complete.

The Problem

Agents are the new attack surface

Traditional security tools weren't built for AI agents. Firewalls don't stop prompt injection. DLP doesn't catch jailbreaks. Your agents need their own security layer.

Prompt injection attacks

Adversaries embed hidden instructions in data your agents read — emails, documents, web pages — hijacking agent actions without you knowing.

Multi-agent trust exploits

When agents talk to agents, trust relationships become attack vectors. A compromised upstream agent can poison the entire chain.

Guardrail bypasses

Most guardrails are written once and never stress-tested. Adversaries iterate. Your guardrails need to iterate too.

Don't wait for a breach to test your agents

Book a demo and see the Red Team Agent in action — watch it find real vulnerabilities in your agents, then watch the Blue Team close them.